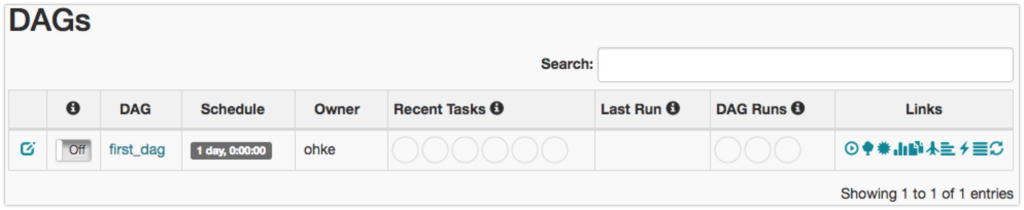

In this article we will talk about how to deploy Apache Airflow using Docker while keeping room to scale out further, and we are going to run the sample dynamic DAG using Docker. Being familiar with Apache Airflow and Docker concepts will be an advantage in following this article.

Before that, let's get a quick idea about Airflow and some of its terms. Airflow is a workflow engine responsible for managing and scheduling jobs and data pipelines. It ensures that the jobs are ordered correctly based on dependencies and also manages the allocation of resources and failures.

Before going forward, let's get familiar with the terms:

- DAG (Directed Acyclic Graph): A set of tasks with an execution order.
- Task or Operator: A defined unit of work.
- Task instance: An individual run of a single task. The states could be running, success, failed, skipped, and up for retry.
- Scheduler: Schedules the jobs or orchestrates the tasks. It uses the DAGs object to decide what tasks need to be run, when, and where.
- Web Server: The UI of Airflow. It also allows us to manage users, roles, and different configurations for the Airflow setup.
- Metadata Database: Stores the Airflow states. Airflow uses SQLAlchemy and Object Relational Mapping (ORM) written in Python to connect to the metadata database.

Tasks are run by an executor, which can be one of the following:

- Sequential: Runs one task instance at a time.
- Local: Runs tasks by spawning processes in a controlled fashion in different modes.
- Celery: An asynchronous task queue/job queue based on distributed message passing. For CeleryExecutor, one needs to set up a queue (Redis, RabbitMQ, or any other task broker supported by Celery) which all the running Celery workers keep polling for new tasks to run.
- Kubernetes: Provides a way to run Airflow tasks on Kubernetes. Kubernetes launches a new pod for each task.

Now that we are familiar with the terms, let's get started.

In this example we use MySQL, but Airflow provides operators to connect to most databases. The SQL script performs data aggregation over the previous day's data from the event table and stores the result in another table, eventstats. We can use Airflow to run the SQL script every day.

The Docker provider package used here is release 3.7.1. All classes for this provider are in a single Python package, and you can find package information and the changelog for the provider in the documentation.

When customizing the image, you can optionally make Airflow install custom binaries or provide custom configuration for your pip in docker-context-files. To enable this, add the `--build-arg DOCKER_CONTEXT_FILES=docker-context-files` build arg when you build the image.

A worker container can be described in a docker-compose file and deployed on an AWS EC2 instance in an ECS cluster.

Next, you can create a new user in Airflow by running the following command: `docker exec -it <container_id> airflow users create --username admin --password admin --firstname First --lastname Last --role Admin --email <email>`. Replace `<container_id>` with the container ID you noted down earlier.
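To make the DAG, task, and task-instance terms a little more concrete, here is a minimal sketch in plain Python. It is not Airflow code and not how the Airflow scheduler is implemented. It only illustrates the idea of running tasks in dependency order and recording a state per task. The task names (`extract_events`, `aggregate_daily`, `store_event_stats`) and the `run_dag` helper are hypothetical, and marking downstream tasks as skipped after a failure is a simplification chosen for this example.

```python
from enum import Enum
from graphlib import TopologicalSorter  # standard library, Python 3.9+


class State(Enum):
    """Task-instance states named in the article."""
    RUNNING = "running"
    SUCCESS = "success"
    FAILED = "failed"
    SKIPPED = "skipped"
    UP_FOR_RETRY = "up_for_retry"


# Hypothetical DAG: each task maps to the set of tasks it depends on.
dag = {
    "extract_events": set(),
    "aggregate_daily": {"extract_events"},
    "store_event_stats": {"aggregate_daily"},
}


def run_dag(dag, runner):
    """Run tasks in dependency order and return a state for each task.

    `runner` is any callable that takes a task name and returns True on
    success. A task whose upstream did not succeed is marked SKIPPED here
    (a simplification; real Airflow has more states and trigger rules).
    """
    states = {}
    for task in TopologicalSorter(dag).static_order():
        if any(states[up] is not State.SUCCESS for up in dag[task]):
            states[task] = State.SKIPPED
            continue
        states[task] = State.SUCCESS if runner(task) else State.FAILED
    return states


if __name__ == "__main__":
    # Pretend every task succeeds and show the resulting states.
    for task, state in run_dag(dag, runner=lambda task: True).items():
        print(task, state.value)
```

In a real deployment the scheduler and executor do this work, and the states are persisted in the metadata database instead of an in-memory dictionary.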
